CarePath Case Study

Successful use of AI to achieve recognized cost savings and improved health outcomes

Background

Today many organizations see mixed success from their attempts to use AI to deliver recognized value in healthcare. One team took a very different approach, focusing on low-cost methods of training LLMs on medical claims in a secure environment, with carefully vetted and skilled individuals.

The approach ultimately proved successful, significantly reducing medical expense and operating expense while also reducing 90-day hospital readmissions by 60%. The approach was independently audited, and its results were independently confirmed.

The lessons and insights from this experience are shared here.

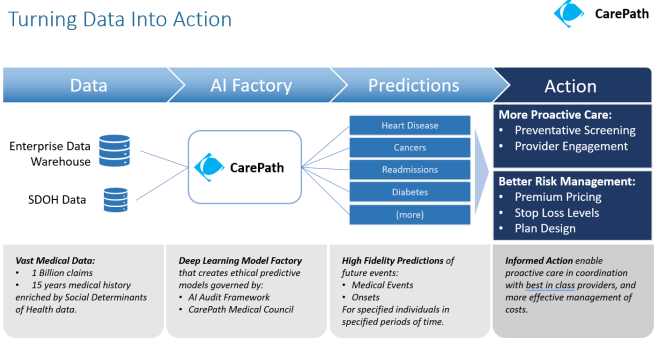

The CarePath model factory approach

During the 2019-2022 period, the Innovation Garage AI team, led by Mitch Quinn, developed a low-cost deep learning approach for training a series of Large Language Models on medical claims data. The team used a model factory approach that focused on optimizing the cost of turning out deep learning models. The training data for these models consisted of just over a billion medical claims spanning a 10-year period, and was enriched with Social Vulnerability Index (SVI) data.

Problem to solve

The medical team that partnered with the Innovation Garage had long relied on the LACE Index - a widely used clinical tool - to predict the risk of readmission following a hospital discharge. The team stated that this approach produced too many false positives - more than the team could actually get to in a week given the number of nurses they had. They also noticed that the people they were reaching out to were often not at risk. They had set themselves a goal of finding a more cost-effective way to predict readmissions and provide more effective intervention to those at risk.
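
For context, the LACE Index as commonly published sums four components: Length of stay, Acuity of admission, Comorbidity (Charlson index) and Emergency department visits in the prior six months. The sketch below is a minimal illustration using the commonly published point values, with a score of 10 or more often treated as high risk. The function and parameter names are hypothetical, and the code is not drawn from the medical team's own tooling.

```python
def lace_score(length_of_stay_days: int,
               acute_admission: bool,
               charlson_index: int,
               ed_visits_6mo: int) -> int:
    """Return a LACE score (0-19) from its four components.

    Point values follow the commonly published LACE table; treat them
    as an illustration, not as the scoring used by the team in this case study.
    """
    # L: length of stay, in days
    if length_of_stay_days < 1:
        l_points = 0
    elif length_of_stay_days <= 3:
        l_points = length_of_stay_days
    elif length_of_stay_days <= 6:
        l_points = 4
    elif length_of_stay_days <= 13:
        l_points = 5
    else:
        l_points = 7

    # A: acuity, 3 points for an acute (emergent) admission
    a_points = 3 if acute_admission else 0

    # C: Charlson comorbidity index, 0-3 map directly, 4 or more scores 5
    c_points = charlson_index if charlson_index <= 3 else 5

    # E: emergency department visits in the prior six months, capped at 4
    e_points = min(ed_visits_6mo, 4)

    return l_points + a_points + c_points + e_points


HIGH_RISK_CUTOFF = 10  # commonly cited cut-off; an assumption here

score = lace_score(length_of_stay_days=5, acute_admission=True,
                   charlson_index=2, ed_visits_6mo=1)
is_high_risk = score >= HIGH_RISK_CUTOFF
```

Because the score depends on only four coarse inputs, many discharged patients can land above the cut-off, which is consistent with the false-positive volume the team described.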

This problem gave the CarePath team a clear objective to focus on. The team worked in close partnership with a group of medical doctors and clinicians (including the care management team noted above). This group convened weekly workshops to evaluate the individual outputs of the latest CarePath models against specific patient cases and known progression patterns or comorbidities, and to check them for medical accuracy and acceptability. This created an efficient feedback loop that allowed models to be refined and improved more rapidly. Models flagged with unacceptable outputs were retrained and then reviewed the subsequent week.

Using this approach, the team demonstrated that it could predict medical onsets and events more reliably, and significantly better, than traditional analytics and ML techniques.

The model training process was also optimized for the lowest possible cost using the AWS spot market. This removed cost as a barrier to model refinement: for the team, each LLM was disposable. The average training run lasted 12-24 hours and cost about $20, which provided a very efficient continuous model training capability. At one point, an attempt was made to install the CarePath factory in an on-prem datacenter with conventional servers. The first training run was aborted because all calculations indicated that it would take 72 days.
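
To put these figures side by side, the short sketch below works through the arithmetic using only the numbers quoted above (about $20 per run, 12-24 hours per run, and a projected 72 days on-prem). The function names are hypothetical, and the results are rough estimates because the quoted cost and duration are averages.

```python
def implied_hourly_cost(run_cost_usd: float, run_hours: float) -> float:
    """Average cost per hour implied by a run's total cost and duration."""
    return run_cost_usd / run_hours


def slowdown_factor(on_prem_days: float, cloud_run_hours: float) -> float:
    """How many times longer the projected on-prem run is than a cloud run."""
    return (on_prem_days * 24) / cloud_run_hours


RUN_COST_USD = 20.0          # "about $20" per training run
SHORT_RUN_H, LONG_RUN_H = 12, 24
ON_PREM_DAYS = 72            # projected duration of the aborted on-prem run

# Implied spend per hour of training: roughly $0.83 to $1.67
hourly_low = implied_hourly_cost(RUN_COST_USD, LONG_RUN_H)
hourly_high = implied_hourly_cost(RUN_COST_USD, SHORT_RUN_H)

# The projected on-prem run is 72x to 144x longer than a typical cloud run
slowdown_low = slowdown_factor(ON_PREM_DAYS, LONG_RUN_H)
slowdown_high = slowdown_factor(ON_PREM_DAYS, SHORT_RUN_H)
```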

Clinical governance

The team established an independently chaired governance function - the CarePath Medical Council (CMC) - composed of legal, privacy, security, and brand/reputation representatives as well as clinical and technical experts. With input from medical doctors and data scientists from multiple healthcare organizations, the council established a charter and voting rules to ensure that outputs from the models would have a human in the loop, and that use of the outputs would not result in bias or harm.

This governance function operated with several recommended principles:

- Clear separation between the creators (those creating models or technology solutions) and the inspectors (those responsible for judging whether a model or solution was truly ready for use in real world healthcare operations). Creators were not allowed to influence outcomes.

- Clear voting rules with tiebreaker provisions that ensured that medical expertise had unconstrained ability to steer outcomes based on medical safety and clinical acceptability.

- Ultimate Veto Power. The CMC had complete and recognized authority to stop a project and prevent its use in real world healthcare operations regardless of other interests or initiatives.

The AI Booth Institute at the University of Chicago was engaged to audit the CarePath models for potential bias or harm. This was an excellent experience in practical accountability for the CarePath team, and the team received good insights from the Booth Institute mentors. The audit found no indications of bias or harm in the models or the way they were being used.

Deployment

The team deployed CarePath models to support a Complex Care Management Hospital to Home program. The program sought to reduce instances where a patient went home after a hospitalization but then suffered a relapse or complication and had to be rehospitalized.

When prior authorizations were submitted for hospital treatments, the patients represented by those prior authorizations were added to a list. The claims histories of these patients were then passed to the CarePath model for evaluation, and the model returned a likelihood of readmission for each patient. Those with likelihoods above a specific threshold were prioritized for outreach - which often occurred before their actual hospitalization. By contacting the patients proactively and establishing early support, the program gave patients additional reassurance and guidance - which ultimately made a significant difference in their health outcomes.
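
The prioritization step described above can be sketched as a simple filter and sort. This is a minimal illustration only: the `Patient` type, the threshold value, and the optional `capacity` limit (standing in for the number of patients the nursing team can reach) are assumptions, not details of the CarePath deployment, and the likelihoods are supplied directly rather than produced by a model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Patient:
    patient_id: str                  # hypothetical identifier
    readmission_likelihood: float    # model output in [0, 1]


def prioritize_for_outreach(patients: List[Patient],
                            threshold: float,
                            capacity: Optional[int] = None) -> List[Patient]:
    """Return patients above the threshold, highest likelihood first.

    If capacity is given, keep only that many patients so the list does
    not exceed what the outreach team can handle.
    """
    flagged = [p for p in patients if p.readmission_likelihood > threshold]
    flagged.sort(key=lambda p: p.readmission_likelihood, reverse=True)
    return flagged if capacity is None else flagged[:capacity]


# Example with made-up likelihoods and an assumed threshold of 0.5
worklist = [
    Patient("A", 0.90),
    Patient("B", 0.20),
    Patient("C", 0.70),
]
outreach = prioritize_for_outreach(worklist, threshold=0.5)
outreach_ids = [p.patient_id for p in outreach]
```

Sorting by likelihood means that when capacity is limited, outreach effort goes first to the patients the model considers most at risk.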

Results

Over the course of its deployment from 2020 to 2022, the CarePath program achieved the following independently validated results:

- Increased effective engagement rates by 57%

- Decreased 90-day hospital readmissions by 60%

- Positive feedback from both clinical teams and patients.

- Significant recognized annual savings (medical expense and operating expense).

References, Press and Recognition

- The Evidence based impact of the Hospital to Home Program.

- Interview with the AI team and medical team explaining the approach.

- Winner of the 2021 ISSIP Excellence in Service Innovation Award. The ISSIP Innovation Award submission (PDF here) included a description of how the team applied LLMs trained on claims to address the challenge of preventable relapses and hospital readmissions.

- Additional press & recognition: Innovation Garage approach, CarePath award announcement, NCTech Award Announcements.

- BCBSNC Blog post describing the program success (this site was down as of Jan 2026)

Part of the CarePath team at the NCTech Beacon Award event. More NCTech Awards Event Photos.

See also Colorado School of Public Health, University of Colorado Anschutz Medical Campus. (2022). Evaluation of Complex Case Management (CCM) and Hospital to Home (H2H) Interventions. Aurora, CO: Richard C. Lindrooth, Ph.D.